...

- swrun -p <partition_name> -c <cpu_per_gpu> -t <walltime> -r <reservation_name>

- <partition_name> (required) : cpun1, cpun2, cpun4, cpun8, gpux1, gpux2, gpux3, gpux4, gpux8, gpux12, gpux16.

- <cpu_per_gpu> (optional) : 16 cpus (default), range from 16 cpus to 40 cpus.

- <walltime> (optional) : 4 hours (default), range from 1 hour to 24 hours in integer format.

- <reservation_name> (optional) : reservation name granted to user.

- example: swrun -p gpux4 -c 40 -t 24 (request a full node: 1x node, 4x gpus, 160x cpus, 24x hours)

- Running long scripts in an interactive job is not recommended. If you are going to walk away from your computer while your script is running, submit a batch job instead. An unattended interactive session can sit idle until its walltime runs out, which keeps those resources from other users. We will issue warnings when we find resource-heavy idle interactive sessions, and repeated offenses may result in revocation of access rights.
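The arithmetic behind the `swrun` example above can be sketched as a small shell function. This is only an illustration: `swrun_summary` is a hypothetical helper, not part of SWSuite, and it assumes that each GPU partition maps to the node and GPU counts listed in the job queue table and that the total CPU count is the GPU count times `<cpu_per_gpu>`. It covers GPU partitions only.

```bash
#!/bin/bash
# Hypothetical helper (not part of SWSuite): checks swrun-style arguments
# against the documented ranges and prints the resources they imply.
swrun_summary() {
    local partition="$1"
    local cpu_per_gpu="${2:-16}"   # documented default: 16 CPUs per GPU
    local hours="${3:-4}"          # documented swrun default: 4 hours
    local nodes gpus

    # GPU partitions only; gpux8/12/16 are assumed to use 4 GPUs per node.
    case "$partition" in
        gpux1|gpux2|gpux3|gpux4)
            gpus="${partition#gpux}"; nodes=1 ;;
        gpux8|gpux12|gpux16)
            gpus="${partition#gpux}"; nodes=$((gpus / 4)) ;;
        *)
            echo "unsupported partition for this sketch: $partition" >&2
            return 1 ;;
    esac

    # Both values must be plain integers.
    case "$cpu_per_gpu" in ''|*[!0-9]*)
        echo "cpu_per_gpu must be an integer" >&2; return 1 ;;
    esac
    case "$hours" in ''|*[!0-9]*)
        echo "walltime must be an integer number of hours" >&2; return 1 ;;
    esac

    # Documented ranges: 16 to 40 CPUs per GPU, 1 to 24 hours.
    if [ "$cpu_per_gpu" -lt 16 ] || [ "$cpu_per_gpu" -gt 40 ]; then
        echo "cpu_per_gpu must be between 16 and 40" >&2; return 1
    fi
    if [ "$hours" -lt 1 ] || [ "$hours" -gt 24 ]; then
        echo "walltime must be between 1 and 24 hours" >&2; return 1
    fi

    echo "${nodes}x node, ${gpus}x gpus, $((gpus * cpu_per_gpu))x cpus, ${hours}x hours"
}
```

Calling `swrun_summary gpux4 40 24` prints the same summary as the full-node example: 1x node, 4x gpus, 160x cpus, 24x hours.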

- swbatch <run_script>

- <run_script> (required) : batch script, in the same format as an original Slurm batch script.

- <job_name> (optional) : job name.

- <output_file> (optional) : output file name.

- <error_file> (optional) : error file name.

- <partition_name> (required) : cpun1, cpun2, cpun4, cpun8, gpux1, gpux2, gpux3, gpux4, gpux8, gpux12, gpux16.

- <cpu_per_gpu> (optional) : 16 cpus (default), range from 16 cpus to 40 cpus.

- <walltime> (optional) : 24 hours (default), range from 1 hour to 24 hours in integer format.

- <reservation_name> (optional) : reservation name granted to user.

example: swbatch demo.swb

demo.swb:

```bash
#!/bin/bash
#SBATCH --job-name="demo"
#SBATCH --output="demo.%j.%N.out"
#SBATCH --error="demo.%j.%N.err"
#SBATCH --partition=gpux1
#SBATCH --time=4

srun hostname
```

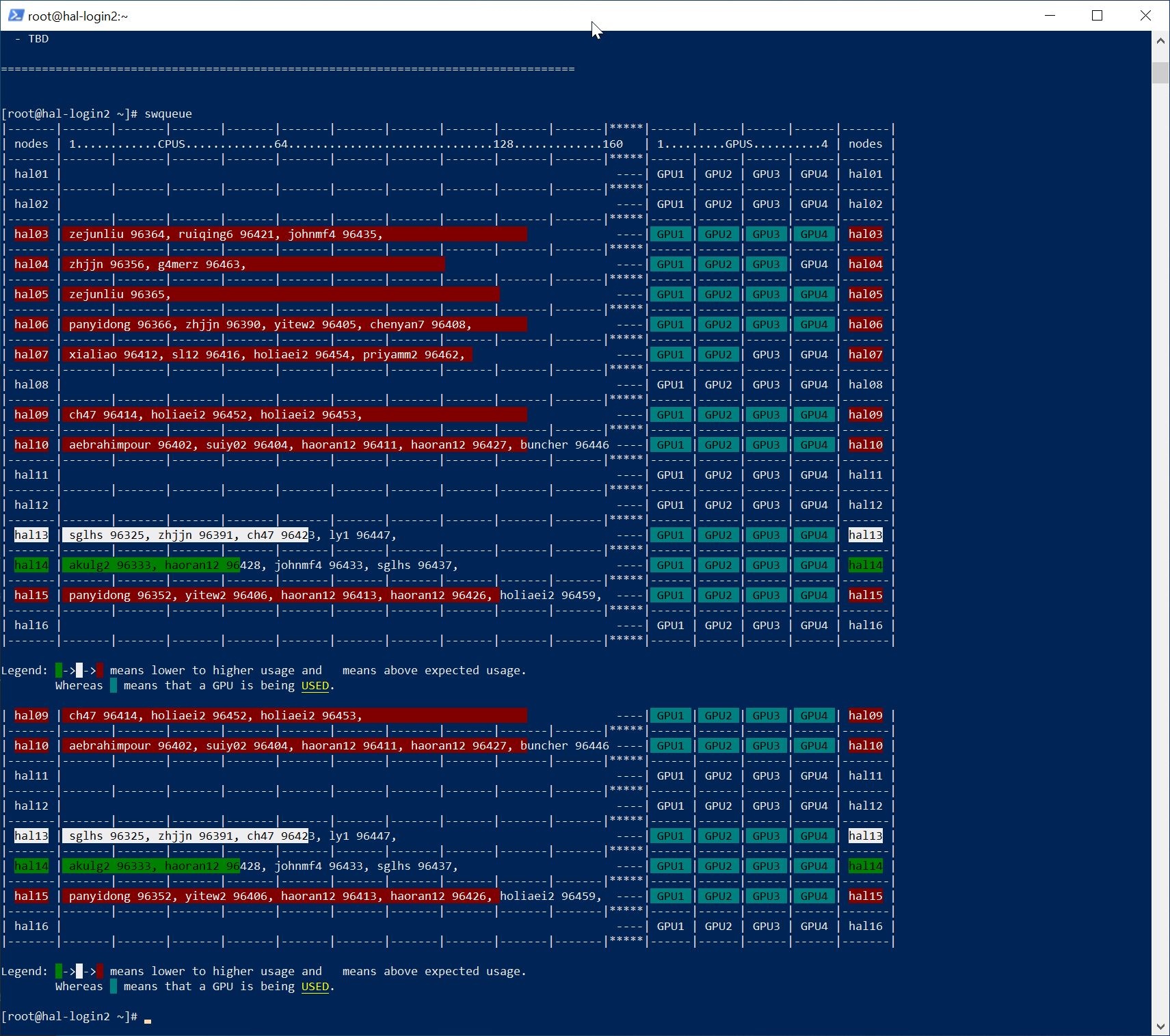

- swqueue

- example: swqueue

New Job Queues (SWSuite only)


| Partition Name | Priority | Max Walltime | Nodes Allowed | Min-Max CPUs Per Node Allowed | Min-Max Mem Per Node Allowed | GPUs Allowed | Local Scratch | Description |
|---|---|---|---|---|---|---|---|---|
| gpux1 | normal | 24 hrs | 1 | 16-40 | 19.2-48 GB | 1 | none | designed to access 1 GPU on 1 node to run sequential and/or parallel jobs. |
| gpux2 | normal | 24 hrs | 1 | 32-80 | 38.4-96 GB | 2 | none | designed to access 2 GPUs on 1 node to run sequential and/or parallel jobs. |
| gpux3 | normal | 24 hrs | 1 | 48-120 | 57.6-144 GB | 3 | none | designed to access 3 GPUs on 1 node to run sequential and/or parallel jobs. |
| gpux4 | normal | 24 hrs | 1 | 64-160 | 76.8-192 GB | 4 | none | designed to access 4 GPUs on 1 node to run sequential and/or parallel jobs. |
| gpux8 | normal | 24 hrs | 2 | 64-160 | 76.8-192 GB | 8 | none | designed to access 8 GPUs on 2 nodes to run sequential and/or parallel jobs. |
| gpux12 | normal | 24 hrs | 3 | 64-160 | 76.8-192 GB | 12 | none | designed to access 12 GPUs on 3 nodes to run sequential and/or parallel jobs. |
| gpux16 | normal | 24 hrs | 4 | 64-160 | 76.8-192 GB | 16 | none | designed to access 16 GPUs on 4 nodes to run sequential and/or parallel jobs. |
| cpun1 | normal | 24 hrs | 1 | 96-96 | 115.2-115.2 GB | 0 | none | designed to access 96 CPUs on 1 node to run sequential and/or parallel jobs. |
| cpun2 | normal | 24 hrs | 2 | 96-96 | 115.2-115.2 GB | 0 | none | designed to access 96 CPUs per node on 2 nodes to run sequential and/or parallel jobs. |
| cpun4 | normal | 24 hrs | 4 | 96-96 | 115.2-115.2 GB | 0 | none | designed to access 96 CPUs per node on 4 nodes to run sequential and/or parallel jobs. |
| cpun8 | normal | 24 hrs | 8 | 96-96 | 115.2-115.2 GB | 0 | none | designed to access 96 CPUs per node on 8 nodes to run sequential and/or parallel jobs. |
| cpun16 | normal | 24 hrs | 16 | 96-96 | 115.2-115.2 GB | 0 | none | designed to access 96 CPUs per node on 16 nodes to run sequential and/or parallel jobs. |
| cpu_mini | normal | 24 hrs | 1 | 8-8 | 9.6-9.6 GB | 0 | none | designed to access 8 CPUs on 1 node to run tensorboard jobs. |
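The per-node limits in the table follow a regular pattern, which the sketch below reproduces. It is an illustration only: `partition_limits` is a hypothetical function, not an SWSuite command. It assumes 1200 MB of memory per CPU (derived from the gpux1 row: 19.2 GB for 16 CPUs, with 1 GB taken as 1000 MB), 16 to 40 CPUs per GPU, and at most 4 GPUs per node. Memory is printed in MB to keep the arithmetic in integers.

```bash
#!/bin/bash
# Hypothetical helper (not part of SWSuite): reproduces the per-node
# CPU and memory ranges from the job queue table.
partition_limits() {
    local partition="$1"
    local gpus_per_node min_cpus max_cpus
    local mb_per_cpu=1200   # assumption: 19.2 GB / 16 CPUs (gpux1 row)

    case "$partition" in
        gpux1|gpux2|gpux3|gpux4)
            gpus_per_node="${partition#gpux}" ;;
        gpux8|gpux12|gpux16)
            gpus_per_node=4 ;;          # multi-node jobs use full 4-GPU nodes
        cpun1|cpun2|cpun4|cpun8|cpun16)
            gpus_per_node=0; min_cpus=96; max_cpus=96 ;;
        cpu_mini)
            gpus_per_node=0; min_cpus=8; max_cpus=8 ;;
        *)
            echo "unknown partition: $partition" >&2
            return 1 ;;
    esac

    # GPU partitions scale with the documented 16-40 CPUs per GPU.
    if [ "$gpus_per_node" -gt 0 ]; then
        min_cpus=$((gpus_per_node * 16))
        max_cpus=$((gpus_per_node * 40))
    fi

    echo "${min_cpus}-${max_cpus} CPUs, $((min_cpus * mb_per_cpu))-$((max_cpus * mb_per_cpu)) MB per node"
}
```

For example, `partition_limits gpux3` reports 48-120 CPUs and 57600-144000 MB per node, matching the 57.6-144 GB entry in the gpux3 row.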

...

```bash
srun --partition=debug --pty --nodes=1 \
     --ntasks-per-node=16 --cores-per-socket=4 \
     --threads-per-core=4 --sockets-per-node=1 \
     --mem-per-cpu=1200 --gres=gpu:v100:1 \
     --time 01:30:00 --wait=0 \
     --export=ALL /bin/bash
```

...