Components

Tape Library

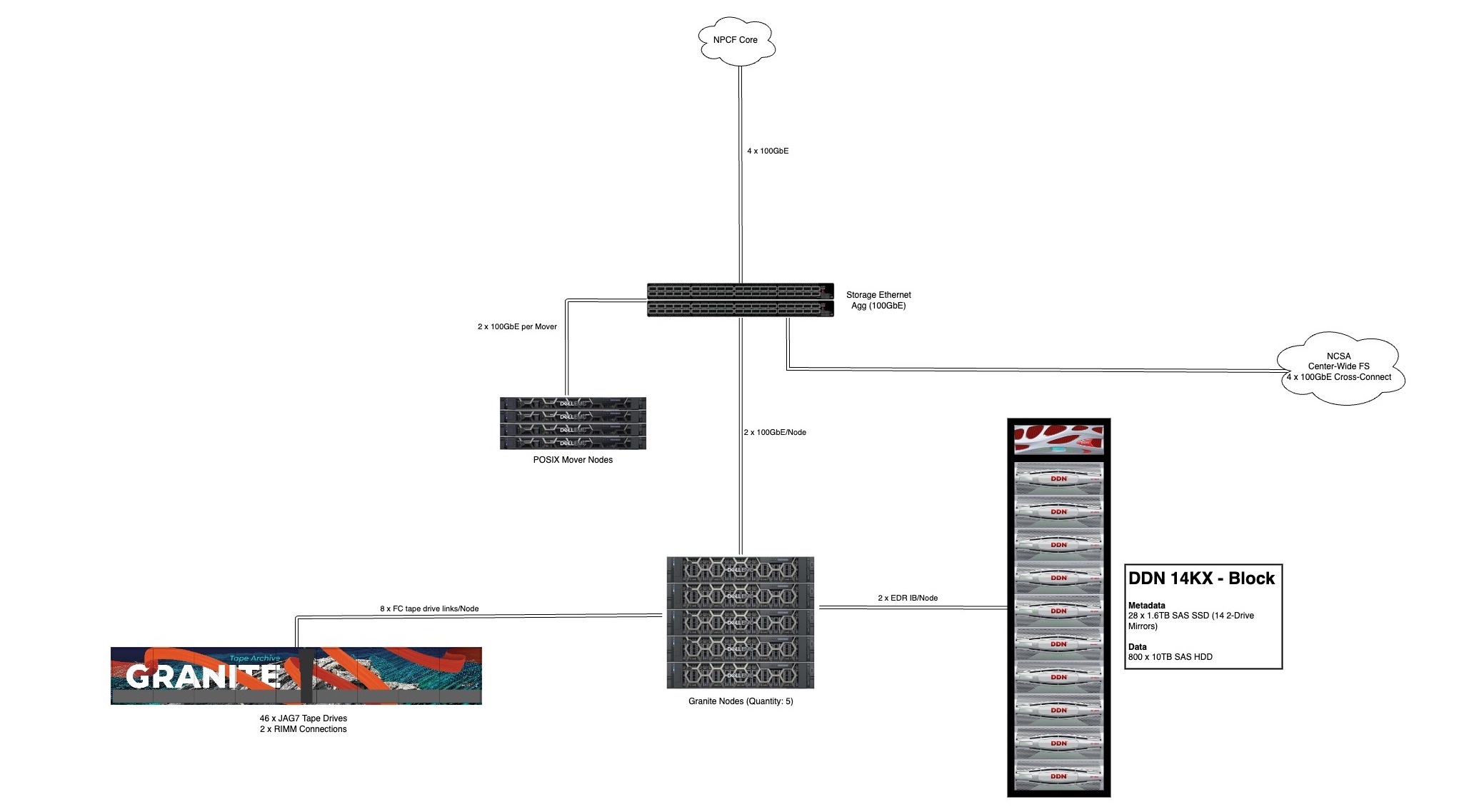

Granite consists of a single Spectra TFinity library. This 19-frame library can currently hold ~65 PB of data, using 30 TS1140 tape drives to read and write more than 9,000 IBM 3592-JC tape cartridges.

Granite Mover Nodes

Four nodes currently make up Granite's server infrastructure. Each node has direct Fibre Channel connections to 8 interfaces on the tape library, so the four nodes together cover all 32 library interfaces (30 drives plus 2 robotic controllers).
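The interface count works out exactly: 4 nodes × 8 links = 32 = 30 drives + 2 robotic controllers. The sketch below checks that arithmetic and shows one possible way the links could be spread across nodes. The round-robin assignment and the interface and node names are hypothetical and illustrative only; they are not Granite's actual cabling.

```python
# Hypothetical sketch: four mover nodes, each with 8 direct Fibre Channel links,
# covering every tape-library interface. The round-robin layout and all names
# below are illustrative assumptions, not Granite's real cabling plan.
NODES = 4
LINKS_PER_NODE = 8
DRIVES = 30
ROBOTIC_CONTROLLERS = 2

# Label each library interface (hypothetical names).
interfaces = [f"drive-{i:02d}" for i in range(1, DRIVES + 1)]
interfaces += [f"robot-{i}" for i in range(1, ROBOTIC_CONTROLLERS + 1)]

# Each interface gets exactly one direct link, so the totals must match.
assert NODES * LINKS_PER_NODE == len(interfaces)  # 32 == 30 + 2


def assign_round_robin(items, n_nodes):
    """Distribute interfaces across nodes in round-robin order."""
    layout = {f"node{n + 1}": [] for n in range(n_nodes)}
    for idx, item in enumerate(items):
        layout[f"node{idx % n_nodes + 1}"].append(item)
    return layout


layout = assign_round_robin(interfaces, NODES)
for node, links in layout.items():
    print(node, len(links), links[:3])
```

With round-robin placement every node ends up with exactly 8 links, and the two robotic controllers land on different nodes, which is one reasonable way to avoid a single node owning all robot control paths.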

Each Granite node is also connected at 2x100GbE to the SET aggregation, which in turn provides a 2x100GbE connection to the NPCF core network. The nodes also use this network to serve the NFS exports that the Globus data movers and other clients mount to access the archive.
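As a back-of-the-envelope check, the nominal Ethernet line rate across all mover nodes follows directly from the figures above (4 nodes, 2x100GbE each). This is a ceiling only; achievable throughput will be lower once protocol overhead, NFS, and disk and tape limits are taken into account.

```python
# Nominal aggregate line rate of the mover nodes' Ethernet links, using only the
# figures in the text: 4 nodes, each with 2x100GbE. Real throughput is lower.
NODES = 4
LINKS_PER_NODE = 2
LINK_GBPS = 100  # gigabits per second per 100GbE link


def aggregate_gbps(nodes: int, links: int, gbps: float) -> float:
    """Total nominal line rate in gigabits per second."""
    return nodes * links * gbps


total_gbps = aggregate_gbps(NODES, LINKS_PER_NODE, LINK_GBPS)
total_gbytes = total_gbps / 8  # 8 bits per byte
print(f"{total_gbps:.0f} Gb/s nominal, about {total_gbytes:.0f} GB/s")
# prints "800 Gb/s nominal, about 100 GB/s"
```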

Disk Cache

The archive disk cache is where all data must land when it is ingested into or extracted from the archive. It currently consists of a DDN SFA 14KX unit with a mix of SAS SSDs (for metadata) and SAS HDDs (for capacity), providing ~2 PB of disk cache in front of the archive.
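Because every transfer is staged through the disk cache, it can help to estimate how long a full cache takes to migrate to tape. The sketch below is a rough, idealized estimate. The ~2 PB cache size and 30 drives come from the text; the per-drive rate of 250 MB/s is an assumption (the TS1140's uncompressed native rate). It ignores tape mounts, seeks, and robot contention, so real drain times will be longer.

```python
# Idealized time to migrate a full disk cache to tape.
# From the text: ~2 PB of cache and 30 drives.
# ASSUMPTION: 250 MB/s per drive (TS1140 native, uncompressed), with all drives
# streaming in parallel. Mounts, seeks, and robot contention are ignored.
CACHE_BYTES = 2e15          # ~2 PB (decimal units)
DRIVES = 30
DRIVE_BYTES_PER_S = 250e6   # assumed per-drive native rate


def drain_hours(cache_bytes: float, drives: int, rate: float) -> float:
    """Hours to stream cache_bytes to tape using `drives` drives in parallel."""
    seconds = cache_bytes / (drives * rate)
    return seconds / 3600


hours = drain_hours(CACHE_BYTES, DRIVES, DRIVE_BYTES_PER_S)
print(f"about {hours:.0f} hours ({hours / 24:.1f} days)")
# prints "about 74 hours (3.1 days)"
```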

Cluster Export Nodes

The tape archive is NFS-mounted directly on the Globus export nodes, giving them direct access to the archive. Because Granite shares its export nodes with Taiga, Globus transfers between Taiga and Granite can be faster and more direct.
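On a Linux export node, one simple way to confirm that the archive is actually NFS-mounted is to look it up in `/proc/mounts`. The sketch below is a hypothetical health check; the mount point `/granite` is an illustrative assumption, not the real path used on the export nodes.

```python
# Hypothetical health check for a Globus export node: confirm the archive path
# is an NFS mount. The mount point "/granite" is an illustrative assumption.
# On Linux, /proc/mounts lists one mount per line:
#   <source> <mountpoint> <fstype> <options> <dump> <pass>
import os
from typing import Optional


def mount_fstype(mounts_text: str, mountpoint: str) -> Optional[str]:
    """Return the filesystem type mounted at `mountpoint`, or None if absent."""
    fstype = None
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3 and fields[1] == mountpoint:
            fstype = fields[2]  # a later (stacked) mount shadows earlier ones
    return fstype


def is_nfs_mount(mounts_text: str, mountpoint: str) -> bool:
    """True if the mount point is backed by NFS (nfs or nfs4)."""
    return (mount_fstype(mounts_text, mountpoint) or "").startswith("nfs")


if __name__ == "__main__" and os.path.exists("/proc/mounts"):
    with open("/proc/mounts") as f:
        print(is_nfs_mount(f.read(), "/granite"))
```

Parsing the text separately from reading the file keeps the check easy to test against sample `/proc/mounts` contents.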